Point and Shoot to Search, Learn and Shop

You’ve searched online for an image using words to describe what you’re looking for, but how do you search for something you can’t put into words? Sometimes a picture can convey a message more effectively than a written description. After all, a picture is worth a thousand words. We already take pictures of all sorts of things. Now, thanks to recent advances in visual search technology, those pictures alone can be used to perform searches. Whether you’re trying to identify an object, determine what’s wrong, find a coordinating piece or learn where to buy, visual search could provide the best answer.

What is visual search?

Visual search uses an image or part of an image as a search query instead of text. The image can come from anywhere – a webpage, app, your saved photos or a real-time image on your screen in the camera app. While it only accounts for a small portion of searches, visual search is gaining popularity and is expected to continue growing rapidly for the foreseeable future.

Volume for visual search is currently estimated at 1 billion searches per month, which pales in comparison to the hundreds of billions of text-based searches. But that’s still impressive when you consider that only about 27 percent of US internet users are even aware it exists, according to a 2017 survey by Toluna and the National Retail Federation.

Explosive growth is anticipated, especially in relation to digital shopping. An August 2018 study by ViSenze found that the ability to search by image topped the list of new technologies millennials would be most comfortable with as part of their digital shopping experience. That same study also showed 62.2 percent of Generation Z and 61.7 percent of millennial consumers want search capabilities that would “enable them to quickly discover and identify the products on their mobile devices.”

How does it work?

Visual search platforms use image recognition tools to interpret the queried image, then return results in the form of other images as well as text-based responses. Correctly identifying the image is only part of the challenge; determining the implicit question the user wants answered has been notoriously difficult. For example, someone submitting an image of a pomegranate might want to identify the fruit, learn how to grow it, find out where to buy it, look up recipes or read about its health benefits. Thanks to artificial intelligence and a growing library of data and images, the accuracy of visual search responses is improving.
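To make the matching step concrete, here is a minimal sketch in Python. It is not how any particular platform works: real systems typically rely on features learned by neural networks, while this toy version assumes a coarse color histogram as a stand-in for those features. The catalog, the pixel data and the function names are all hypothetical, chosen only for illustration.

```python
import math


def color_histogram(pixels, bins_per_channel=2):
    """Summarize a list of (r, g, b) pixels as a normalized coarse color histogram.

    This is a simplified stand-in for the learned features a real system would use.
    """
    step = 256 // bins_per_channel
    top = bins_per_channel - 1
    hist = [0.0] * (bins_per_channel ** 3)
    for r, g, b in pixels:
        # Clamp each channel's bin so a value of 255 never falls outside the histogram.
        rb, gb, bb = min(r // step, top), min(g // step, top), min(b // step, top)
        hist[(rb * bins_per_channel + gb) * bins_per_channel + bb] += 1.0
    total = sum(hist)
    return [count / total for count in hist] if total else hist


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def visual_search(query_pixels, catalog, top_k=2):
    """Rank catalog entries by how closely their features match the query image."""
    query_features = color_histogram(query_pixels)
    scored = [
        (cosine_similarity(query_features, color_histogram(pixels)), label)
        for label, pixels in catalog.items()
    ]
    scored.sort(reverse=True)
    return [label for _, label in scored[:top_k]]


# Hypothetical catalog: each "image" is just a short list of RGB pixels.
CATALOG = {
    "pomegranate": [(200, 30, 40)] * 8 + [(120, 20, 30)] * 2,
    "lime": [(60, 200, 50)] * 10,
    "blueberry": [(40, 50, 180)] * 10,
}

# A mostly red query photo, as if snapped with a phone camera.
query = [(210, 40, 50)] * 9 + [(100, 10, 20)]
print(visual_search(query, CATALOG, top_k=1))
```

The sketch covers only the "find similar images" half of the problem. Working out which question the user is really asking (identify, buy, cook, grow) is a separate step that this example does not attempt.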

When is visual search useful?

Visual search works in tandem with textual search; the two are complementary, not competitive, since each fulfills a different need. Visual search is ideal for queries that are hard to verbalize or don’t make sense without an image, such as “What goes well with these pants?” or “What kind of car is that?” It works best for questions in these key categories:

- “What is this?”

- “How can I replace this part?”

- “What goes with this?”

- “What does this mean?”

- “Who is this?”

- “What’s wrong?”

Key visual search platforms

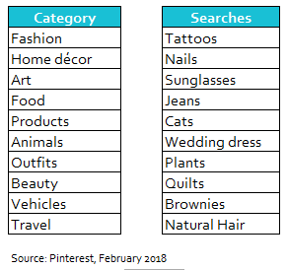

Pinterest

With over 175 billion pins on more than 3 billion boards and counting, it’s no surprise that Pinterest is one of the top visual search platforms. Although Pinterest launched its first image search options in 2015, its core visual search tool, Pinterest Lens, was introduced in beta just last year. Lens gives users the ability to search using saved photos or real-time images from their camera. Thanks to “responsive visual search,” it’s also possible to zoom in to search only a specific part of an image. So, what are people searching for?

Pinterest Search Trends

Amazon

Remember the Fire Phone? Me neither, because it was a flop. But its visual search tool, Firefly, was one of the first developed, and that technology is now available to other companies through Amazon’s Rekognition platform. In fact, Pinterest uses it to interpret text within images. It’s also the muscle behind Snapchat’s visual search tool, which allows users to point their camera at an object, then press and hold the screen to see an Amazon product card pop up.

Google

Google has been using visual search to tag the images and videos it crawls for years, but only recently went all-in with the beta release of Google Lens in 2017 as part of a broader strategy to make search more visual. What sets Google Lens apart is the nearly limitless range of questions it can address, since it’s backed by a general search engine. Planning a trip to a foreign country? Be sure to have the Lens app installed. With its translation capability, you can simply point your smartphone camera at written text or a sign in a foreign language and see a translated version. You can also scan an area with the camera, without taking a photo, and Lens will display results for objects it has identified. See someone wearing a pair of shoes you just have to have? Snap a picture and Lens will link directly to a product page or show you just the right outfit to pair them with.

eBay

eBay has been busy developing search tools as well, including Image Search and Find It On eBay. Image Search allows smartphone users to take a picture of an item and search for it on eBay, or search product reviews and recommendations based on an image found on another site. Similarly, Find It On eBay allows users to send images found elsewhere online to eBay for analysis and recommendations. Drag and drop features let users drag anything on eBay into the search bar to find related products.

Facebook

Despite its sophisticated image recognition ability, Facebook has yet to join the visual search race. As of now, Facebook has limited its technology to tagging photo content to improve text-based image search results. But it’s bound to join eventually, given the visual nature of Instagram and the core role of the smartphone camera across its properties.

What does the future hold?

Currently there is no advertising within visual search, but the technology is becoming increasingly important. Now is the time for brands to build and catalog their product image libraries and create links from images to the most relevant landing pages. It is also essential to think through the actions a user might take or the questions they may have after seeing an image, and to create ways to address them.

Visual search is changing the way consumers find products and information. It’s part of a virtuous cycle – as awareness grows and usage increases, accuracy will improve. Improved accuracy will fuel extended use, making a picture not only worth a thousand words, but a thousand search queries.
